Circular economy

How humankind forgot about energy sustainability

Circular economy is the planet’s natural order, one that humankind once forgot. In the quest to return our energy system to carbon neutrality, scientists are now turning to ancient inventions to inspire the next 100 years of sustainable energy production.

Expect to learn:

Why circular economy is an age-old pursuit – and how humankind forgot about it

Which innovations by prehistoric and ancient cultures laid the foundation for our emerging 21st-century energy system

Whether, 100 years from now, energy historians will say we did enough to stop climate change

As you fly over the world’s oceans, you may witness a sight that looks like a set from a sci-fi drama: vast armies of giant wind turbines standing ramrod-straight in formation, their blades hoisted to attention, jutting out of the water. It’s hard to imagine that this futuristic image has anything to do with ancient history. Yet you’re witnessing the end product of innovation that began thousands of years ago.

Throughout human history, the existence of our species has depended on our ability to produce energy. What we may not recognize, at least at first glance, is that many of the technologies we now see as the future of sustainable energy production were, in fact, once the past. Before we developed our addiction to fossil energy, humans sustainably harnessed the overwhelming force of nature for hundreds of thousands of years. That’s a mindset we now need to relearn.

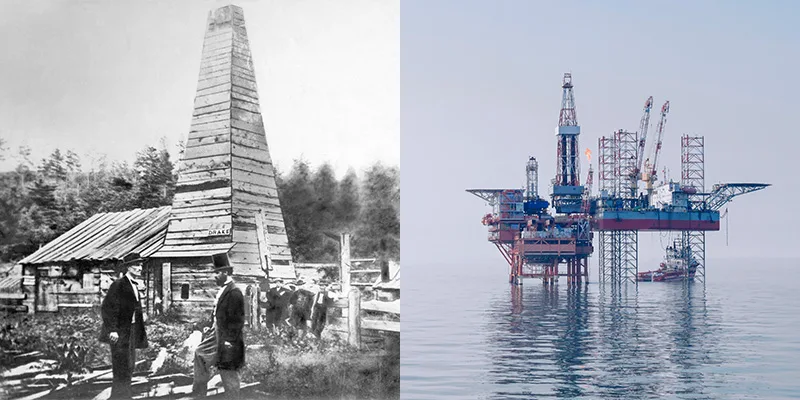

How fossil energy came to dominate the world

When the first commercial oil well was tapped in North America in the 1850s, it pumped the oil industry into existence, overhauling the way we lived, worked and travelled. Crude oil was not a new acquaintance, however: by then, cultures had used it for millennia for purposes such as waterproofing, flaming weapons and medicine. The Chinese were reportedly the first to discover underground oil deposits in salt wells, with Chinese historical records describing oil wells over 100 feet deep as early as 500 BCE. Still, it wasn’t until the Industrial Revolution that fossil raw materials became a vital part of our lives.

In the 19th century, we began to use coal, first to power steam engines and later to generate electricity, expanding its use to homes, transport and factories alike. In the middle of the century, oil refining was developed, and suddenly crude oil could be transformed into useful products such as kerosene and petroleum. At the turn of the 20th century, the arrival of personal automobiles radically increased gasoline demand, encouraging further development in oil refining. Natural gas had first been commercially produced to light houses and streets in England in the late 18th century, and over the next two centuries we expanded its use to produce heat and electricity.

Image: The first commercial oil well, built in Titusville, Pennsylvania in 1859, was the prototype for modern oil platforms.

The birth of the fossil fuel industry was a catalyst for enormous change and great opportunity. Populations grew and quality of life improved. But little did we know how damaging this advance would be to the environment, or how short-lived the fossil energy era would prove to be.

Although the term “circular economy” only began to spread in the 1970s, until the dawn of the fossil era we had lived by circular economy principles: living off the land and, in the big picture, replenishing the energy sources we used at roughly the rate we consumed them.

However, fossil raw materials take millions of years to form, and just a matter of minutes to burn. The equation didn’t make sense, and we realized – nearly too late – our folly.

“A little blip in the grand scheme of human history”

While the era of fossil oil and gas may now seem like all we’ve ever known, historians will eventually look back at our reliance on fossil fuels as an aberration in the long history of human energy production and use: a Promethean addiction that keeps burning us, time and time again, by torching the planet we live on.

“It’s physically impossible that the age of fossil hydrocarbons could be anything other than a little blip in the grand scheme of human history,” says Professor Christopher Jones of the Arizona State University Global Institute of Sustainability. The big question, says Jones, “is what comes next.” Jones is the author of Routes of Power (Harvard, 2014), a book on the causes and consequences of the fossil fuel revolution, and a leading researcher on the historical and social dimensions of energy systems.

The small “blip” of the hydrocarbon, fossil fuel era obscures a broader, steadier advance of alternative, sustainable energy sources. But we’re not simply “returning” to historical sources, reckons Paul Warde, Professor of Environmental History at the University of Cambridge, “because the way we harness them is very different.”

Becoming disconnected from energy production

Until the 19th century, we devoted much of our landscape to energy production, even though it wasn’t thought of as such. We sowed crops in fields to grow the food that gave us our basic calories. The reserves of woodland and peatland around our villages drove our economy.

Then we became distanced from our energy sources.

The fossil energy era, sparked in the 19th century, allowed us to transport energy across space in the form of coal, oil and gas. A major societal shift came when energy production became concentrated at a limited number of specific sites, often far from where we lived: our mines, our refineries and our power stations. “People became disconnected from the way energy was obtained, and one could argue any sense of its impact in their daily lives,” says Paul Warde of the University of Cambridge.

That’s all changing today. Recognizing the detrimental effect that overusing virgin fossil raw materials has on our planet, we’re starting to return, with new eyes and new technologies, to inventions and energy sources we once dismissed as too inefficient or simply old-fashioned.

The downside of burning things

The precise time and place of the first deliberately set fire have been lost to history: it occurred in prehistory, during the Palaeolithic era, which began around 2.5 million years ago. We do know that it was fuelled by wood, making it the first known use of biomass as an energy source.

The earliest recorded fire occurred in Wonderwerk Cave in northern South Africa around a million years ago.

Scholars are divided on whether that fire, which the surviving debris shows was deliberately set, was the first example of wood being used as an energy source. (Other, less widely cited examples put the first use nearly 500,000 years earlier.) Either way, it would have heated a cave, cooked food and provided light in the darkness for all who gathered around it. It offered a setting for socializing through the night, giving birth to the art of storytelling.

The fire triggered a revelation in our ancient ancestors: set fire to wood and you could get heat and light from it – both of which could be harnessed for good.

There was, of course, a catch: burning biomass comes with a cost to our environment and health – a cost we’ve only recently learned to start mitigating.

Almost as quickly as humans started to set fire to wood to provide heat and light, they also started to suffer from the new toxins they were exposed to. Eventually, human DNA adapted to make us more resilient to fumes, providing modern humans with a genetic mutation that allows us to metabolize certain toxins found in smoke at a safe rate. Recent research also suggests that gathering closely around campfires may have sparked the spread of tuberculosis – a disease that might have killed more people than wars and famine combined.

Yet, at least in the short term, the benefits of burning outweighed the negatives: an attitude humankind has since taken to many energy sources that seem revelatory at first but turn out to have a twist in the tale.

From cavemen’s fires to future-facing biomass

Despite the hazards, the use of biomass has remained constant throughout history, particularly in developing countries, where it’s still the main or only source of energy for heating homes and cooking food, but also in developed countries with significant forest industries, such as Finland and Sweden. Today, however, a range of energy companies and research institutions are looking at biomass with new eyes.

Image: From cavemen’s campfires to transforming agricultural waste into valuable products, biomass has remained a constant throughout the history of human energy production.

Obvious as it may sound, for the first time in human energy history we have learned to make wide use not just of the prime parts of biomass, but of its residues, too.

Today, our timber, papermaking and agriculture produce huge amounts of lignocellulosic residues, such as forest harvesting residues or straw, that can be used to produce heat and power. “This is a large, yet partly untapped, raw material pool that is one significant element in reducing the use of crude oil in the production of fuels, polymers and chemicals globally,” says Perttu Koskinen, Vice President of Discovery and External Collaboration at Neste.

Utilizing those residues may sound simple, but it’s not.

Neste has for over a decade commercially transformed other kinds of renewable waste and residues, such as animal fat waste and used cooking oil, into high-quality renewable fuels for road transport and aviation. Utilizing the hard residues from agriculture and forestry, however, requires different, advanced technologies. These have existed for a little under a decade and are only now approaching commercial scale.

For Neste, the current innovation goal is to unlock the potential of several new, globally scalable raw materials, including algae, forestry and agricultural waste and residues, and municipal waste. Neste aims to transform these renewable materials into more refined products, such as high-quality renewable fuels, chemicals and building blocks for plastics.

Luckily, widening the pool of raw materials isn’t the only way humanity is now shaking off the long hangover of the fossil energy age. We’re also writing a plot twist in the tale of electricity.

Wind power’s human hurdles

The onshore and offshore wind farms that dot landscapes and coastlines across Europe, the United States and China are a far cry from the first windmills, developed in Persia to pump water and grind grain around 500 BCE. They’re even far removed from the more recent Dutch windmills that drained parts of the Rhine River delta in the 14th century. The first electricity-generating wind turbine was built in rural Scotland in 1887 by Professor James Blyth, in the garden of his holiday home. From there, it took nearly 100 years for the first multi-megawatt wind turbine to be built.

For decades, fossil fuel-dependent societies saw wind power merely as a supply of last resort, and it remained in the shadow of fossil fuels well into the 20th century. During the 1970s oil crisis, wind finally gained a bigger foothold, becoming a key bridge energy source for the United States, where the federal government invested millions of dollars to make it commercially viable.

Today, wind capacity is increasing at an incredible rate, with offshore capacity forecast to triple to 65 gigawatts by 2024, according to the International Energy Agency (IEA). That growth is fueled by a drastic decrease in the price of generating wind power, with contracted costs dropping to record lows worldwide.

Image: The vertical-axis Nashtifan windmills in Northern Iran, dating back 1,000 years to ancient Persia, are still used for milling grain into flour. Most modern windmills have horizontal axes.

For the United States, which vowed never again to be subject to oil shortages, wind is proving a popular contributor: from less than 1 percent of the country’s electricity generation in 1990, its share rose to 7.3 percent in 2019. Meanwhile, global wind generation has increased from 3.6 billion kWh of electricity in 1990 to 1.13 trillion kWh, according to the International Energy Agency.

While Professor Blyth and his contemporaries inarguably laid the foundation for the later development of wind power, historians have at times gone as far as to describe their inventive efforts as failures. The reasoning behind the critique is that for decades, wind power remained a niche alternative both to fossil energy and to the world’s dominant source of renewable electricity: hydropower.

Trickle-down hydropower

The centuries either side of the transition from BCE (Before Common Era) to CE (Common Era) were a time of great development. Great works of literature were being written down for the first time, while others were being performed on stage. Societies in Greece and Rome developed the first ideas of accountability, politics and patronage, and Aristotle coined the word enérgeia, which could be translated as “being at work”.

Ancient civilizations waged wars and developed technologies that would come to be used in our homes and businesses, such as concrete, air conditioning and vending machines. The most innovative Greeks also recognized the power of the substance that covers around 70 percent of our planet’s surface: water.

The roiling current of a river and its tributaries was recognized as a form of elemental energy in its own right, and enterprising Greeks were keen to harness it. They developed the first water wheel to grind grain into flour and, in doing so, invented the idea of the industrial process. It was a leap forward in efficiency and a way to ease the strain on human beings.

Later, people recognized that combining the two main sources of power up to that point, water and fire, could create something even more powerful: steam. Eventually, steam-powered processes replaced water mills across the globe, and hydropower gave way to steam.

Today, we’re once more recognizing the role the planet’s plentiful supply of water can play in our energy mix. Hydropower is currently the world’s main source of renewable power and is expected to remain so through 2024. Three enormous projects in China and Ethiopia due to come online in the next few years will account for a quarter of the growth in hydropower capacity worldwide, according to the International Energy Agency. These megaprojects will be on the scale of China’s Three Gorges Dam, currently the largest power plant in the world.

Image: The norias, early hydropowered machines, were designed during the Byzantine era. Some still remain in Hama, Syria (on the left).

Hydro energy does, however, have its limitations. While engineers are trying to build fish-safe hydropower turbines, current hydropower plants are known to harm aquatic ecosystems. Experts also note that significantly scaling the use of hydro energy will require technological development to drive down its costs. The cost-cutting narrative, of course, has dominated the tale of renewables for decades, as a 1995 New York Times article titled “70’s Dreams, 90’s Realities; Renewable Energy: A Luxury Now. A Necessity Later?” highlights.

In the article, journalist Agis Salpukas described how, according to energy experts, “the so-called renewable sources may well survive only as window dressing for utility company annual reports,” because of price competition. “Renewables are a luxury that belt-tightening utilities can no longer afford, executives complain, particularly now that natural gas is so plentiful and gas-fired generating plants so inexpensive to operate,” Salpukas wrote.

Salpukas further noted, however, that “the decline of renewables carries long-term risk, for example, if global warming becomes something more than just a threat.”

Luckily, not all companies lost faith in renewables in the 1990s; some made significant technological advances long before global warming became prime-time news.

All hail the sun

“The sun emits in two minutes the energy the world needs for one year,” says Neste’s Perttu Koskinen. “There’s enough energy coming from the sun, if we can just harness it the right way.” For millennia, we’ve been looking for that right way.

“It was in 1954 that we discovered a way to make electricity from sunlight, and it’s taken us around 70 years to get to the point where making electricity from the sun is almost the least expensive source of energy you can have,” says Sally Benson, co-director of Stanford University’s Precourt Institute for Energy, and director of the university’s Global Climate and Energy Project.

That invention, the first practical solar cell made from silicon, was announced by Bell Labs at a meeting of the National Academy of Sciences. Photovoltaic (PV) cells went on to revolutionize our world, though at the time their efficiency was incredibly low: the first PV cells converted just six percent of the sunlight striking them into usable energy. But that was a good sight better than the way we had previously used the sun as a society.

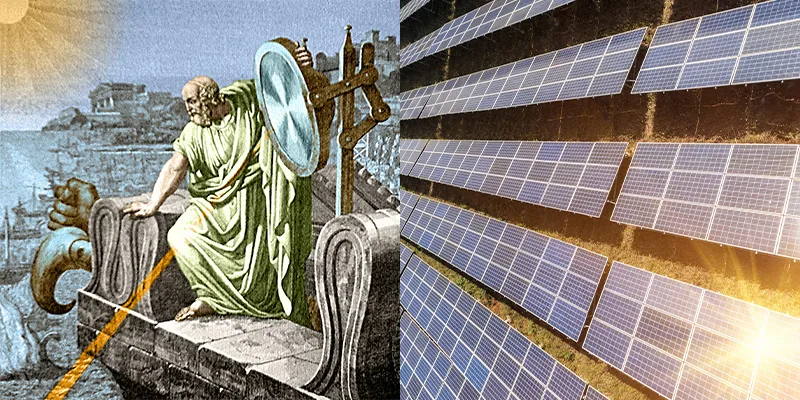

As early as the seventh century BCE, fires were started by using glass lenses to focus the sun’s broad rays into concentrated beams of light. Within 400 years, the Greeks and Romans, and later the Chinese, had developed elaborate arrangements of mirrors that would bounce sunlight and focus it into a single intense beam.

Image: In ancient Greece, an invention known as Archimedes’ death ray reputedly used solar power for a devious cause: focusing sunlight on ships to make them burst into flames.

Architects of the past also began to realize they could harness the power of the sun, after a fashion. They built homes to act as sun traps, heating rooms on south-facing slopes that were guaranteed a decent amount of sun.

Our centuries of progress with solar power finally culminated in a breakthrough in the middle of the 20th century, as the first commercial PV cells were invented. After that, development was rapid.

Between 1957 and 1960, solar cell efficiency leapt from eight to 14 percent; by 1985, solar cells could convert a fifth of the sun’s energy into usable power. By the turn of the millennium, the figure was 33.3 percent, and it has inched up further since. Over the same period, solar panels have powered space satellites and airplanes, all while reducing our reliance on fossil fuels. That trend is likely to continue, reckons Arizona State University’s Jones, who sees solar as the pre-historic energy source most likely to be put to large-scale use. “I think the most likely scenario is not really a return so much just to what came before, but rather a large-scale systematic replacing of fossil fuel inputs with solar fields,” he says.

That will require further cost reductions, though, says Benson. “Even though solar and wind are now very affordable and excellent technologies, and they can and will continue to scale, there are still challenges to becoming 100 percent reliant on renewable resources until we can deal with the challenge of seasonal scale energy storage,” she says. “Right now, we don’t really have good ways to do that. It would have to be like, 20 times cheaper than it is today.”

The missing link might be Power-to-X, a technology platform with the potential to solve both the storage challenge of renewable electricity and an even more devious problem: how to eliminate crude oil from the production of plastics, chemicals and fuels. Power-to-X, or P2X, as insiders call it, uses electrolysis powered by renewable electricity to split water molecules and produce renewable hydrogen. That hydrogen is then reacted with carbon dioxide to make various products: fuels, chemicals and building blocks for plastics. Microorganisms can even use hydrogen and carbon dioxide to produce edible proteins. Put simply, Power-to-X converts what is practically plain air into widely usable, storable commodities, with the help of hydrogen produced from water using renewable electricity.

While the technology may sound utopian, Neste and other leading companies around the world are actively developing it, aiming for full-scale commercialization within a 10-year time horizon. And it’s a tale as old as time that energy innovations are met with disbelief: around 130 years ago, when James Blyth offered to light the main street of his home village in Scotland using current from his cloth-sailed wind turbine, his fellow villagers reportedly refused, considering electric light to be “the work of the devil.”

The future?

When the history books, or e-books, come to be written on our time on this planet, what will they say? How will we have changed the way we live, and how will we have changed our planet? Will we fully shake off the temporary “blip” that was the fossil energy era? And is the return to our prehistoric energy sources an unmitigated good?

Not necessarily, at least not without care, say the experts. We’re turning to biomass, solar panels and wind turbines in greater numbers, but we need to be aware of their impact. They demand space on an increasingly crowded planet, and we run the risk of placing them where they cause disruption.

Furthermore, we’ve become much more energy-intensive, greedily gobbling up all the power we produce. Demand for energy has never been so high, and the U.S. Energy Information Administration projects that world energy consumption will grow by nearly 50 percent between 2018 and 2050, led by demand in Asia.

Just as our ancestors are said to have buried their dead to decompose in the earth and give energy back to nature, so we must once again enter a circular energy economy, in which we give back what we take in equal measure.

And currently, the transition from the fossil era to carbon neutrality is not happening nearly fast enough to meet the Paris climate agreement’s target of limiting global warming to well below 2°C. In transportation, for example, scientists agree that even a rapid shift to electric vehicles would not be enough on its own to hit the goal at this point, especially in freight. The 2020s are the decade of action, and humankind needs to take every possible action right now to cut fossil carbon emissions. In the transport sector, which accounts for around a quarter of global energy-related carbon dioxide emissions, sustainable liquid and gaseous biofuels as well as synthetic fuels made via Power-to-X are all needed, alongside electrification, to replace crude oil.

But Benson believes the history books will look kindly on us. “People will say: ‘What they didn’t realize was in the course of creating this massive modernization, improving lives and growing population, they didn’t realize soon enough that by burning fossil fuels they were going to change the climate.’ And then I think they’ll say, ‘At some point they realized, and they made the changes that were necessary to avoid runaway warming.’”

A large part of that needs to be driven by technological development. “We are on the verge of that, and it’s something we are very much doing at Neste to find the best solutions to make this an affordable, sustainable process,” Neste’s Perttu Koskinen says. “There’s a big transition in the whole energy sector, caused by climate change of course, but also the technology advances in renewable fuels, chemicals and electricity.”

Benson reckons we have a bigger question to ask as a society: “Will we be able to say we levelled the curve sufficiently quickly to avoid major disruption to human civilization and ecosystems?”

That remains to be seen.